Database buffer -B change slow downs QAD programs

Do you have evidence that the slow downs were caused by, and not merely coincident with, the configuration change? Do you know with certainty that nothing else changed at that time, either in the database or in the wider environment? E.g. OS, network, server workload, virtualization, storage, etc. Don't conflate correlation with causation.

So i found out that the rule of thumb is -B parameter should be 10-15% of database size.

This is outdated guidance. The appropriate size of the buffer pool for a given database instance really depends on how the client applications are written and how the users behave, in addition to the database size. The database size puts an upper bound on the appropriate buffer pool size. If a database is very large but is mostly historical data, and the users only read very recent data, then the working set of the application, i.e. the data that is cached in the buffer pool as a result of user activity, may be quite small in comparison.

But if clients read a lot of data then the working set of the applications is much larger and the database and its users could benefit from a much larger buffer pool. Note that this can happen even if the users aren't

attempting to read large quantities of data, for example if the queries are poorly written by the programmer, or if the schema doesn't provide appropriate indexes to match the query predicates. Business users given free rein to run ad hoc queries in a BI tool are also a common cause of this.

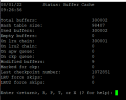

Promon R&D, 1, 7 shows you the current status of the buffer pool. Example:

Code:

07/27/22 Status: Buffer Cache

10:06:22

Total buffers: 3002

Hash table size: 887

Used buffers: 33

Empty buffers: 2969

On lru chain: 3001

On lru2 chain: 0

On apw queue: 0

On ckp queue: 0

Modified buffers: 0

Marked for ckp: 0

Last checkpoint number: 0

LRU force skips: 0

LRU2 force skips: 0

Enter <return>, R, P, T, or X (? for help):

Promon R&D 2,3 shows buffer pool activity and related metrics, including OS reads (reads of a database block from disk).

Code:

07/27/22 Activity: Buffer Cache

10:12:54 07/27/22 10:06 to 07/27/22 10:06 (5 sec)

Total Per Min Per Sec Per Tx

Database Buffer Pool

Logical reads 494 5928 98.80 0.00

Logical writes 0 0 0.00 0.00

O/S reads 26 312 5.20 0.00

O/S writes 3 36 0.60 0.00

Checkpoints 0 0 0.00 0.00

Marked to checkpoint 0 0 0.00 0.00

Flushed at checkpoint 0 0 0.00 0.00

Writes deferred 0 0 0.00 0.00

LRU skips 0 0 0.00 0.00

LRU writes 0 0 0.00 0.00

APW enqueues 0 0 0.00 0.00

Database buffer pool hit ratio: 94 %

This information won't be too meaningful until the database has been up for a while and the users have fully exercised their typical data-access patterns. Note that some of these may be periodic and infrequent, e.g. running weekly/monthly/quarterly reports or updates.

A better rule of thumb is this: given a starting point of your current buffer pool size and your logical and physical (OS) I/O, if you restart your database with a larger buffer pool size and the amount of physical disk reads decreases, given the same logical I/O, then that increase in buffer pool size was beneficial for you. Note that you will not see differences with small increases in -B. Trying doubling -B when testing this, assuming of course you have sufficient available memory.

Remember that the buffer pool is in RAM, so higher utilization means more of the system's memory in use, so more isn't always better. Increasing database memory utilization could in some cases decrease database performance, e.g. if the increase in -B causes increased OS paging, and thus increased disk I/O, or if the increase causes database shared memory to span multiple NUMA nodes. You will need to experiment with different -B values to see what works best with your OpenEdge release, OS, hardware, database, and workload.

Progress version is 10.2b

OpenEdge 10.2B was released thirteen years ago and has been retired for three years. It will never received new OS certifications or new patches for performance, reliability, data protection, or security. The longer your customer uses it, the greater their business risk. They should have a plan in place to migrate to an active OpenEdge release, e.g. 12.2.